GPT 5.5 is not ready for real problems. By the way, Claude nailed it.

ChatGPT is not useful even for classic, self-contained problem analysis! Am I the only one who feels they need to keep reminding it of earlier constraints, even when those constraints are in the personality settings? I sometimes get so irritated with it going off track by ignoring fundamental facts it knew earlier, or facts it simply decided ‘weren’t core’.

Before starting, both Claude and GPT 5.5 had been linked to my Google Calendar, in which available and unavailable dates were clearly marked.

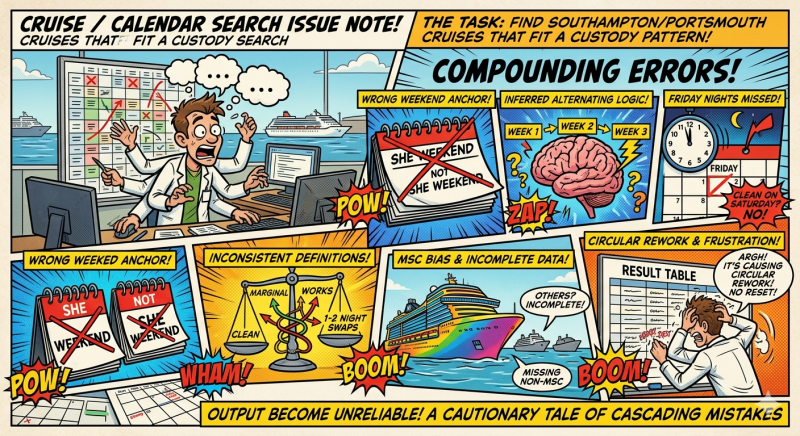

The task was to find Southampton/Portsmouth cruises that fit a custody/calendar pattern. The output became unreliable because several errors compounded.

This is ChatGPT’s own self-report:

Mistakes made:

- Used inferred alternating-weekend logic instead of checking the actual Google Calendar for each date.

- Anchored the alternating pattern to the wrong weekend, which flipped “Shey” and “Not Shey” weekends.

- Treated Saturday departures as clean without checking the preceding Friday night.

- Claimed some cruises “worked” when they actually required one or two night swaps.

- Mixed “clean”, “marginal”, and “works” inconsistently.

- Treated school-holiday periods too loosely, even though boarding does not apply in holidays.

- Listed MSC cruises more completely than other lines, then implied the non-MSC list was complete when it was not.

- Added prices and “all cruises” style tables without having built a full cross-line dataset.

- Corrected individual mistakes, but did not reset the whole table from first principles each time, causing circular rework.

Why it happened:

- I relied on assumptions and pattern projection when I should have used the calendar as the ground truth.

- I combined partial cruise-search results with calendar logic, but did not clearly separate “verified” from “assumed”.

- I did not maintain one fixed rule set throughout.

- I answered incrementally rather than rebuilding the full table after key corrections.

What the user experience felt like:

- The user had to repeatedly catch mistakes.

- The same issue was revisited several times.

- Confidence in the table degraded because corrections were piecemeal.

- The user had to manage the reasoning process rather than just receive a usable answer.

- The task became circular: list cruises → check calendar → find mistake → reclassify → discover another assumption.

Correct method going forward:

- Pull the relevant calendar period first.

- Build a verified calendar map: each Friday marked “You”, “Dad”, “Holiday”, “Boarding/No boarding”.

- Enumerate cruises separately by source/line.

- For each cruise, check exact departure and return dates against the calendar.

- Classify strictly:

  - Clean = 0 swaps

  - Marginal = 1–2 named swap nights

  - Exclude = holiday/full cover needed/too many swaps

- Only say “all cruises” after a full schedule enumeration across all relevant cruise lines.

- Keep prices separate unless sourced and current.
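For anyone wanting to see what "classify strictly" looks like when it can't drift, here is a minimal Python sketch. It rests on assumptions that are not in the original: each night carries one of the hypothetical labels `you`, `dad`, `boarding` or `holiday`; a `you` night is one that needs a swap; and the night before departure is checked as a travel night, which is exactly where the Saturday-departure/Friday-night mistake slipped through. The function names, dates and sample sailing are illustrative only. A night with no calendar entry comes back as `Unverified` rather than being guessed, which keeps "verified" and "assumed" separate.

```python
from datetime import date, timedelta

# Hypothetical per-night labels, loosely mapped from the calendar markings
# ("You", "Dad", "Holiday", "Boarding/No boarding").
NEEDS_SWAP = "you"           # child is with you: someone must cover the night
FREE = {"dad", "boarding"}   # child is with the other parent or at school
HOLIDAY = "holiday"          # school holiday: boarding does not apply


def nights_to_check(depart: date, ret: date) -> list[date]:
    """Night before departure (assumed travel night) through the last night aboard."""
    first = depart - timedelta(days=1)
    return [first + timedelta(days=i) for i in range((ret - first).days)]


def classify(depart: date, ret: date, calendar: dict[date, str]) -> tuple[str, list[date]]:
    """Return (class, swap nights) using only what the calendar actually says."""
    swaps = []
    for night in nights_to_check(depart, ret):
        status = calendar.get(night)
        if status is None:
            return "Unverified", []   # no calendar entry: do not guess
        if status == HOLIDAY:
            return "Exclude", []      # holiday period needs full cover
        if status == NEEDS_SWAP:
            swaps.append(night)
        elif status not in FREE:
            raise ValueError(f"unknown label {status!r} on {night}")
    if not swaps:
        return "Clean", swaps
    if len(swaps) <= 2:
        return "Marginal", swaps
    return "Exclude", swaps           # too many swaps


def build_table(cruises, calendar):
    """Rebuild every row from first principles instead of patching old rows."""
    return [(name, *classify(d, r, calendar)) for name, d, r in cruises]


calendar = {
    date(2026, 5, 8): "you",        # Friday before a Saturday sailing
    date(2026, 5, 9): "dad",
    date(2026, 5, 10): "dad",
    date(2026, 5, 11): "boarding",
}
print(build_table([("Example 3-night sailing", date(2026, 5, 9), date(2026, 5, 12))], calendar))
# [('Example 3-night sailing', 'Marginal', [datetime.date(2026, 5, 8)])]
```

The sample sailing would look clean if only the nights aboard were checked; including the Friday travel night turns it into a one-swap Marginal. Because `build_table` recomputes every row from the calendar, a correction to one date flows through the whole table instead of being patched row by row.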